Today, more and more Windows-based devices are touch-friendly, and many of them have no hardware keyboard at all. Let’s put aside the question of how convenient it is to type more than a few words on a touch keyboard (it’s a nuisance); Windows has (of course) built-in support for these scenarios.

From the user’s point of view, it works like this: when a control receives input focus, the on-screen keyboard automatically pops up, and when the control loses focus, the keyboard disappears. This works well for standard Windows controls, but (surprise, surprise) it doesn’t work in .NET WinForms. So you end up with customers complaining, “It works in Notepad, but in your shiny app I always have to open the on-screen keyboard manually,” which is exactly what you don’t want…

The common practice & how not to do it

Searching the internet, you’ll find basically only one solution, based on manually launching either “osk.exe” or “tabtip.exe”. It works after a fashion, but I still consider it a workaround rather than a solution. Here is why:

- what happens if the location of osk.exe changes in a future version of Windows?

- on 64-bit versions of Windows, you have to make sure you are invoking the correct version of osk.exe

- will it work for users with restricted permissions?

- the keyboard won’t close automatically

- it is not good programming practice in general; we can do better

- apparently it doesn’t work on the Windows 10 Anniversary Update
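
To make the 64-bit point above concrete, here is a minimal sketch of what picking the “correct” osk.exe tends to involve. It assumes the usual file-system redirection rules: a 32-bit process on 64-bit Windows is redirected from System32 to SysWOW64, and the virtual `Sysnative` folder bypasses that. The class and method names (`OskLocator`, `ResolveOskPath`) are made up for illustration, and this is a sketch of the workaround, not a recommendation.

```csharp
using System;
using System.IO;

static class OskLocator
{
    // Pure helper: decides which system folder to use from the given facts,
    // so the logic can be checked without touching the real machine.
    public static string ResolveOskPath(string windowsDir, bool is64BitOs, bool is64BitProcess)
    {
        // Only a 32-bit process on a 64-bit OS needs the "Sysnative" alias;
        // everyone else can use System32 directly.
        string systemFolder = (is64BitOs && !is64BitProcess) ? "Sysnative" : "System32";
        return Path.Combine(windowsDir, systemFolder, "osk.exe");
    }

    // Convenience overload that reads the facts from the current environment.
    public static string ResolveOskPath()
    {
        string windowsDir = Environment.GetFolderPath(Environment.SpecialFolder.Windows);
        return ResolveOskPath(windowsDir,
                              Environment.Is64BitOperatingSystem,
                              Environment.Is64BitProcess);
    }
}
```

Even with this in place, the remaining points in the list (permissions, closing the keyboard, future changes to the file location) still apply.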

I didn’t believe that Notepad works the way described above, so I started digging. The result is described in the next part. But before we start coding, it is better to set up the environment first.

Setting up the environment

If you have a touch-friendly device such as a Surface or a Yoga, you can skip the first step.

Step 1

If you don’t have a device equipped with a touch screen, you have to emulate touch input with the mouse instead.

The first option is to use the Multi-Touch Vista driver, which works on all versions of Windows, but I find it quite cumbersome to get running.

The second option (and my preferred one) is the Microsoft Windows Simulator, which comes with Visual Studio (2012 and newer) and runs on Windows 8 or newer. You can find it at

C:\Program Files\Common Files\Microsoft Shared\Windows Simulator\14.0\Microsoft.Windows.Simulator.exe (use 'Program Files (x86)' on 64-bit)

The Simulator is primarily intended for developing and testing Metro (or Modern, or Universal, or, rebranded for the third time, Windows) apps, but it’s very handy for testing classic desktop applications as well. It is a great tool because all your apps (Visual Studio especially) are available directly in the simulator, so you don’t need to develop and build your app in one place and then test it elsewhere. Using the simulator is quite easy; practically the only thing you need to do is switch on the touch mode (the third button on the right bar).
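
If you would rather locate the simulator from code (for example, in a small launcher script for your test setup), a sketch along these lines may help. It only probes the path quoted above under both “Common Files” roots; the `14.0` segment is taken from that path and is likely to differ between Visual Studio versions. `SimulatorLocator` and its members are hypothetical names used for illustration.

```csharp
using System;
using System.Collections.Generic;
using System.IO;

static class SimulatorLocator
{
    // Relative path as quoted in the article; "14.0" is version-specific.
    const string RelativePath =
        @"Microsoft Shared\Windows Simulator\14.0\Microsoft.Windows.Simulator.exe";

    // Builds one candidate path per non-empty "Common Files" directory.
    public static List<string> CandidatePaths(IEnumerable<string> commonFilesDirs)
    {
        var result = new List<string>();
        foreach (string dir in commonFilesDirs)
        {
            if (!string.IsNullOrEmpty(dir))
                result.Add(Path.Combine(dir, RelativePath));
        }
        return result;
    }

    // Returns the first candidate that exists on disk, or null if none does.
    public static string FindSimulator()
    {
        var roots = new[]
        {
            // Empty on 32-bit Windows, which CandidatePaths skips.
            Environment.GetFolderPath(Environment.SpecialFolder.CommonProgramFilesX86),
            Environment.GetFolderPath(Environment.SpecialFolder.CommonProgramFiles),
        };
        foreach (string candidate in CandidatePaths(roots))
        {
            if (File.Exists(candidate))
                return candidate;
        }
        return null;
    }
}
```

A caller could pass the result of `FindSimulator()` to `System.Diagnostics.Process.Start` once it has checked for `null`.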

Step 2

This is actually relevant only to Windows 10; if you run an older version of Windows, you probably don’t need to set up anything.

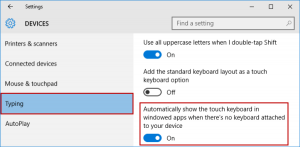

Because you are developing a classic desktop app rather than a Metro app, you probably won’t run Windows in Tablet mode, but in the old-fashioned Desktop mode. In this mode, the on-screen keyboard does not automatically pop up in text fields, address bars, or anywhere else you need to type. Luckily, we can change that under Windows Settings -> Devices -> Typing by checking the option “Automatically show the touch keyboard in windowed apps when there’s no keyboard attached to your device.”

Now the on-screen keyboard should pop up automatically when you open, for example, Notepad. You can also verify that it still doesn’t work for TextBoxes in WinForms apps.
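
If you want to check this setting from a test harness rather than by clicking through the Settings app, the following sketch reads it from the registry. Note the assumption: the key `HKEY_CURRENT_USER\Software\Microsoft\TabletTip\1.7` and the value `EnableDesktopModeAutoInvoke` are not mentioned in this article; they are where this option is commonly reported to be stored on Windows 10, so verify them on your own machine before relying on them. `TouchKeyboardSetting` is a hypothetical helper name.

```csharp
using System;
using Microsoft.Win32;

static class TouchKeyboardSetting
{
    // ASSUMPTION: commonly reported location of the "Automatically show the
    // touch keyboard in windowed apps" option; not confirmed by the article.
    const string KeyName = @"HKEY_CURRENT_USER\Software\Microsoft\TabletTip\1.7";
    const string ValueName = "EnableDesktopModeAutoInvoke";

    // Treats a non-zero DWORD as "enabled"; a missing or non-integer value counts as "disabled".
    public static bool Interpret(object rawValue)
    {
        return rawValue is int flag && flag != 0;
    }

    // Reads the current user's setting. Registry.GetValue returns null when the
    // key is missing and the supplied default (also null) when the value is missing.
    public static bool IsAutoInvokeEnabled()
    {
        object raw = Registry.GetValue(KeyName, ValueName, null);
        return Interpret(raw);
    }
}
```

The sketch only reads the value; changing the option is still best done through the Settings page described above.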

2 Comments

1. Define a process:

    private System.Diagnostics.Process keyboardProc;

2. Turn on the keyboard:

    // Turn on the keyboard: start osk.exe unless our instance is still running.
    if (keyboardProc == null || keyboardProc.HasExited)
    {
        try
        {
            // Alternative with a hard-coded path:
            // System.Diagnostics.Process.Start("C:\\Windows\\System32\\OSK.EXE");
            keyboardProc = System.Diagnostics.Process.Start("osk.exe");
        }
        catch (Exception error)
        {
            MessageBox.Show("[" + error + "]", "Exception Error", MessageBoxButtons.OK);
        }
    }

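3. Turn off the keyboard:

    // Turn off the keyboard.
    if (keyboardProc != null)
    {
        if (!keyboardProc.HasExited)
        {
            keyboardProc.Kill();
        }
        else
        {
            // The process we started has already exited, so we cannot close
            // a keyboard window that may still be visible.
            MessageBox.Show("Need to manually close the\nOn-Screen Keyboard");
        }
    }
    keyboardProc = null;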

4. The project’s platform target has to be set to x64.